Introducing the new Prometheus connector

December 5, 2024 - Lorenzo NicoraWe are excited to announce a new sink connector that enables writing data to Prometheus (FLIP-312). This articles introduces the main features of the connector, and the reasoning behind design decisions.

This connector allows writing data to Prometheus using the Remote-Write push interface, which lets you write time-series data to Prometheus at scale.

Motivations for a Prometheus connector #

Prometheus is an efficient time-series database optimized for building real-time dashboards and alerts, typically in combination with Grafana or other visualization tools.

Prometheus is commonly used for observability, to monitor compute resources, Kubernetes clusters, and applications. It can also be used to observe Flink clusters and jobs. The Flink Metric Reporter has exactly this purpose.

So, why do we need a connector?

The goal of the new Flink Prometheus connector is not to observe Flink. The goal is to use Flink as pre-processor for observability data coming from diverse systems, and be able to write this processed time-series directly to Prometheus.

Prometheus can serve as a general-purpose observability time-series database, beyond traditional infrastructure monitoring. For example, it can be used to monitor IoT devices, sensors, connected cars, media streaming devices, and any resource that streams events or measurements continuously.

Observability data from these use cases differs from metrics generated by compute resources. They present additional challenges:

- Out-of-order events: Devices may be connected via mobile networks or even Bluetooth. Events from different devices may follow different paths and arrive at very different times. A stateful, event-time logic can be used to reorder them.

- High frequency and high cardinality: You can have a sheer number of devices, each emitting signals multiple times per second. Aggregating over time and over dimensions can reduce frequency and cardinality and make the volume of data more efficiently analysable.

- Lack of contextual information: Raw events sent by the devices often lack of contextual information for a meaningful analysis. Enrichment of raw events, looking up some reference dataset, can be used to add dimensions useful for the analysis.

- Noise: sensor measurement may contain noise. For example when a GPS tracker loses connection and reports spurious positions. These obvious outliers can be filtered out to simplify visualization and analysis.

Flink can be used as a pre-processor to address all the above.

You can implement a sink from scratch or use AsyncIO to call the Prometheus Remote-Write endpoint. However, there are non-trivial details to implement an efficient Remote-Write client:

- There is no high-level client for Prometheus Remote-Write. You would need to build on top of a low-level HTTP client.

- Remote-Write can be inefficient unless write requests are batched and parallelized.

- Error handling can be complex, and specifications demand strict behaviors (see Strict Specifications, Lenient Implementations).

The new Prometheus connector manages all of this for you.

Note that registering some custom Flink metrics and having them pushed to Prometheus by the Metric Reporter is not really an option here, for multiple reasons. For example:

- The dimensions of these time-series are not known upfront and often have high cardinality, even after preprocessing. Think for example of a dimension

deviceIDwhere the value is the ID of the IoT device that is generating the measurement. This can easily be thousands. In Flink you would have to register a separate custom metric for each device. - A Flink custom metric will send to Prometheus a sample of the metric every fixed period. Usually several seconds. This happens when scraped, with the Prometheus reporter, or at the interval configured with the PrometheusPushGateway reporter. If you are processing measurements from devices you want to control this frequency.

- But the most fundamental reason is that these time-series are data from the point of view of the Flink application, and custom metrics are designed to observe how Flink process data, not for data itself.

Key features #

The version 1.0.0 of the Prometheus connector has the following features:

- DataStream API, Java Sink, based on AsyncSinkBase.

- Configurable write batching.

- Order is retained on retries.

- At-most-once guarantees (we’ll explain the reason for this later).

- Supports parallelism greater than 1.

- Configurable retry strategy for retryable server responses (e.g., throttling) and configurable behavior when the retry limit is reached.

Authentication #

Authentication is explicitly out of scope for Prometheus Remote-Write specifications. The connector provides a generic interface, PrometheusRequestSigner, to manipulate requests and add headers. This allows implementation of any authentication scheme that requires adding headers to the request, such as API keys, authorization, or signature tokens.

In the release 1.0.0, an implementation for Amazon Managed Service for Prometheus (AMP) is provided as a separate, optional dependency.

Designing the connector #

A sink to Prometheus differs from a sink to most other datastores or time-series databases. The wire interface is the easiest part; the main challenges arise from the differing design goals of Prometheus and Flink, as well as from the Remote-Write specifications. Let’s go through some of these aspects to better understand the behavior of this connector.

Data model and wire protocol #

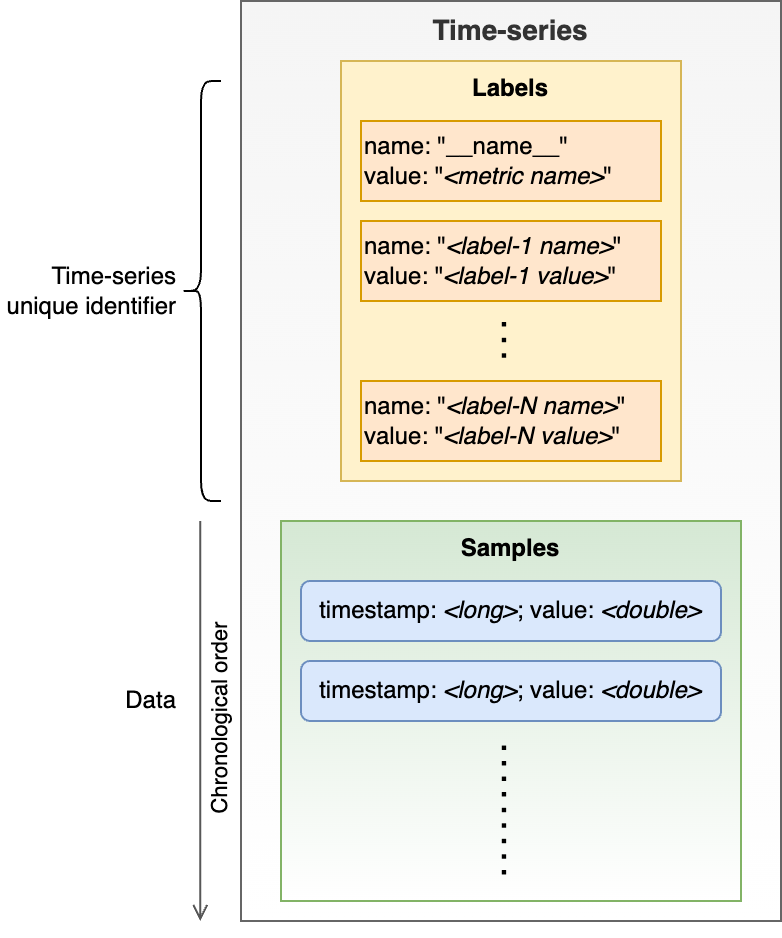

Prometheus is a dimensional time-series database. Each time-series is represented by a series of Samples (timestamp and value), identified by a set of unique Labels (key, value). Labels represents the dimensions with one label named __name__ playing the special role of metric name. The set of Labels represent the unique identifier of the time-series. Records (Samples) are simply the values (doubles) in order of timestamp (long, as UNIX timestamp since the Epoch).

Fig. 1 - Logical data model of the Prometheus TSDB.

The wire-protocol payload defined by the Remote-Write specifications allows batching in a single write request datapoints (samples) from multiple time-series, and multiple samples for each time-series. This the Protobuf definition of the single method exposed by the interface:

func Send(WriteRequest)

message WriteRequest {

repeated TimeSeries timeseries = 1;

// [...]

}

message TimeSeries {

repeated Label labels = 1;

repeated Sample samples = 2;

}

message Label {

string name = 1;

string value = 2;

}

message Sample {

double value = 1;

int64 timestamp = 2;

}

The POST payload is serialized as Protobuf 3, compressed with Snappy.

Specifications constraints #

Specifications do not impose any constraints on repeating TimeSeries with the same set of Label, but it does require strict ordering of Sample belonging to the same time-series (by timestamp) and Label in a single TimeSeries (by name).

The term “time-series” is overloaded, referring to both:

- A unique series of samples in the datastore, identified by a unique set of labels, and

TimeSeriesas a block of theWriteRequest.The two concepts are obviously related, but a

WriteRequestmay contain multipleTimeSerieselements referring to the same datastore time-series.

Prometheus design principles vs Flink design principles #

Flink is designed with a consistency-first approach. By default, any unexpected error causes the streaming job to fail and restart from the last checkpoint.

In contrast, Prometheus is designed with an availability-first approach, prioritizing fast ingestion over strict consistency. When a write request contains malformed entries, the entire request must be discarded and not retried. If you retry, Prometheus will keep rejecting your write, so there is no point doing it. Additionally, samples belonging to the same time series (with the same dimensions or labels) must be written in strict timestamp order.

You may have already spotted the issue: any malformed or out-of-order samples can act as a “poison pill” unless you drop the offending request and proceed.

Strict specifications, lenient implementations #

The Remote-Write specifications are very strict regarding the ordering of Labels within a request, the ordering of Samples belonging to the same time-series, and preventing from retrying rejected requests.

However, many Prometheus backend implementations are more lenient. They may support a limited, configurable out-of-order time window and may not enforce strict label ordering as long as other rules are followed.

As a result, a connector that strictly enforces the Remote-Write specifications may limit the user’s flexibility compared to what their specific Prometheus implementation allows.

Error-handling and guarantees #

As we have seen, the Remote-Write specifications require that certain rejected writes—specifically those related to malformed and out-of-order samples (typically 400 responses)—must not be retried. The connector has no choice but to discard the entire offending request and continue with the next record. The specifications do not define a standard response format, and there is no reliable way to automatically identify and selectively discard the offending samples.

The connector itself does not introduce out-of-order writes. Also, a KeySelector is provided to keyBy the stream sent to the connector to ensure the input order is not lost. Upstream of this point partitioning data correctly, identifying and resolving out-of-order data. See User’s responsibilities, below.

For this reason, this is an at-most-once connector.

If data loss is unavoidable, the best the connector can do is to make data loss very evident to the user. Each time a request is dropped, a WARN log is emitted, containing the endpoint’s response (which provides an indication of the issue) and incrementing counters for the number of dropped samples and requests.

Not that, when the job restarts from checkpoints, some duplicate writes inevitably happen and they are discarded. As a user, you can safely ignore them.

Scaling writes: batching, parallelizing, and ordering #

There are two ways to scale write throughput to Prometheus: batching multiple samples into a single write request and parallelizing the writes.

Parallelizing poses challenges due to the ordering requirements imposed by Prometheus. You must ensure that all samples belonging to the same time series (defined as records with an identical set of labels) are written by the same thread.

Additionally, max inflight request is fixed to 1 for the underlying Async Sink.

Otherwise, you risk accidental out-of-order writes, even if your data is initially in order. The connector handles this by ensuring that writes are never retried asynchronously. However, it is the user’s responsibility to partition the data before reaching the sink to preserve the correct order.

User’s responsibilities #

As always, giving users more control also brings more responsibility. Since the actual ordering constraints depend on the specific Prometheus backend implementation, the connector grants users the freedom (and the responsibility) to ensure they are sending valid data.

If the data is invalid, Prometheus will reject the write request, and the Flink job will continue. Logs and metrics will indicate that something is wrong.

In particular, it is the user’s responsibility to correctly partition the data within the Flink application and upstream so that the order per time series is maintained. Failing to do so will result in some data being dropped. That said, dropping a few data points is generally not an issue for observability use cases (if your use case requires zero data loss, you should probably consider a different time-series database). Additionally, we’ve observed that some Prometheus backends may occasionally drop writes, even when they are rigorously sent in order—especially when writing at high parallelism and pushing throughput to the limits of the backend resources.

Testing the connector #

Testing the connector posed some interesting challenges. Beyond typical unit test coverage, we implemented several “integration tests” to examine the stack of the error handler, retry policy, and HTTP client together. However, the most challenging aspect was testing behavior under error conditions and at scale. We quickly realized there was no simple way to test many of these error conditions automatically using a containerized Prometheus, especially not at scale.

We decided to prioritize a manual testing plan, covering over 40 combinations of configurations and error conditions. We ran tests with Prometheus in Docker, and with Amazon Managed Service for Apache Flink and Amazon Managed Service for Prometheus to easily test at scale.

In particular, the unhappy scenarios that have been tested include:

- Malformed (label names violating the specifications), and out of order writes.

- Restart from savepoint and from checkpoint.

- Behavior under backpressure, simulated exceeding the destination Prometheus quota, letting the connector retrying forever after being throttled.

- Maximum number of retries is exceeded, also simulated via Prometheus throttling, but with a low maximum retries value. The connector behavior in this case is configurable, so both “fail” (job fails and restart) and “discard and continue” behaviors have been tested.

- Remote-write endpoint is not reachable.

- Remote-write authentication fails.

- Inconsistent configuration, such as (numeric) parameters outside the expected range.

To facilitate testing we created a data generator, a separate Flink application, capable of generating semi-random data and of introducing specific errors, like out-of-order samples. The generator can also produce a high volume of data for load and stress testing. One aspect of the test harness requiring special attention was not losing ordering. For example, we used Kafka between the generator and the writer application. Kinesis was not an option due to its lack of strict ordering guarantees.

Finally, the connector was also stress-tested, writing up to 1,000,000 samples per second with 1,000,000 cardinality (distinct time-series). 1 million is where we stopped testing, not a limit of the connector itself. This throughput has been achieved with parallelism between 24 and 32, depending on the number of samples per input record. Obviously, your mileage may vary, depending on your Prometheus backend, the samples per record, and the number of dimensions.

Future improvements #

There are a couple of obvious improvements for future releases:

- Table API/SQL support

- Optional validation of input data

Both these features were considered for the first release, but excluded due to the challenges they would pose. Let’s go through some of these considerations.

Consideration about Table API /SQL interface #

The structure of the record that Prometheus expects is hierarchical. A TimeSeries contains multiple Labels (key-value pairs, which essentially form the primary key of the record), and multiple Samples, which hold the actual measurement value and the timestamps. The maximum number of Labels depends on the Prometheus implementation, and a TimeSeries can have tens of Labels. Additionally, a single TimeSeries record can contain hundreds of Samples.

This type of structured record is not easily represented in a table abstraction.

A user-friendly Table API implementation would require flattening this structure, imposing a reasonable limit on the number of Labels, and including only a single Sample per record. While this does not prevent the sink from batching multiple records into a single write request, it would limit user flexibility and scalability.

Considerations about data validation #

Data validation would be a convenient feature, allowing to discard or send to a “dead letter queue” invalid records, before the sink attempts the write request that would be rejected by Prometheus. This would reduce data loss in case of malformed data, because Prometheus rejects the entire write request (the batch) regardless of the number of offending records.

However, validating well-formed input would come with a significant performance cost. It would require checking every Label with a regular expression, and checking the ordering of the list of Labels, on every single input record.

Additionally, checking Sample ordering in the sink would not allow reordering, unless you introduce some form of longer windowing that would inevitably increase latency. If latency is not a problem, some form of reordering can be implemented by the user, upstream of the connector.

List of Contributors #

Lorenzo Nicora, Hong Teoh, Francisco Morillo, Karthi Thyagarajan