Scala Free in One Fifteen

February 22, 2022 - Seth Wiesman (@sjwiesman)

Flink 1.15 is right around the corner, and among the many improvements is a Scala-free classpath. Users can now leverage the Java API from any Scala version, including Scala 3!

This blog will discuss what has historically made supporting multiple Scala versions so complex, how we achieved this milestone, and the future of Scala in Apache Flink.

All Scala dependencies are isolated into the flink-scala jar.

To remove Scala from the user-code classpath, remove this jar from the lib directory of the Flink distribution.

$ rm flink-dist/lib/flink-scala*

The Classpath and Scala

If you have worked with a JVM-based application, you have probably heard the term classpath. The classpath defines where the JVM will search for a given classfile when it needs to be loaded. There may only be one instance of a classfile on each classpath, so any dependency Flink exposes is forced onto its users. That is why the Flink community works hard to keep our classpath “clean” - or free of unnecessary dependencies. We achieve this through a combination of shaded dependencies, child-first class loading, and a plugins abstraction for optional components.
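To make child-first class loading concrete, here is a minimal sketch of the general technique. It is not Flink's actual implementation: the class name `ChildFirstLoaderSketch` and the choice to always delegate `java.*` classes to the parent are assumptions made for illustration.

```java
import java.net.URL;
import java.net.URLClassLoader;

// Hypothetical sketch of child-first delegation; not Flink's real class loader.
class ChildFirstLoaderSketch extends URLClassLoader {

    public ChildFirstLoaderSketch(URL[] urls, ClassLoader parent) {
        super(urls, parent);
    }

    @Override
    protected Class<?> loadClass(String name, boolean resolve) throws ClassNotFoundException {
        synchronized (getClassLoadingLock(name)) {
            // Reuse the class if this loader has already loaded it.
            Class<?> loaded = findLoadedClass(name);
            if (loaded == null) {
                if (name.startsWith("java.")) {
                    // Assumption: core JDK classes always come from the parent.
                    loaded = super.loadClass(name, false);
                } else {
                    try {
                        // Child first: look in this loader's own URLs (the user jars).
                        loaded = findClass(name);
                    } catch (ClassNotFoundException notInChild) {
                        // Fall back to the default parent-first lookup.
                        loaded = super.loadClass(name, false);
                    }
                }
            }
            if (resolve) {
                resolveClass(loaded);
            }
            return loaded;
        }
    }
}
```

With a loader like this, a class bundled in the user jar wins over a class of the same name on the parent classpath, so a framework dependency is less likely to shadow the version an application ships.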

The Apache Flink runtime is primarily written in Java but contains critical components that forced Scala onto the default classpath. And because Scala does not maintain binary compatibility across minor releases, this historically required cross-building components for all versions of Scala. But for many reasons - breaking changes in the compiler, a new standard library, and a reworked macro system - this was easier said than done.

Hiding Scala

As mentioned above, Flink uses Scala in a few key components: Mesos integration, the serialization stack, RPC, and the table planner. Instead of removing these dependencies or finding ways to cross-build them, the community hid Scala. It still exists in the codebase but no longer leaks into the user-code classloader.

In 1.14, we took our first steps in hiding Scala from our users. We dropped support for Apache Mesos, which was partially implemented in Scala and which Kubernetes has largely eclipsed in adoption. Next, we isolated our RPC system, including Akka, into a dedicated classloader. With these changes, the runtime itself no longer relied on Scala (which is why flink-runtime lost its Scala suffix), but Scala was still ever-present in the API layer.

These changes, and the ease with which we implemented them, started to make people wonder what else might be possible. After all, we isolated Akka in less than a month, a task stuck in the backlog for years, thought to be too time-consuming.

The next logical step was to decouple the DataStream / DataSet Java APIs from Scala. This primarily entailed cleaning up a few test classes, but also identifying code paths that are only relevant for the Scala API. These paths were then migrated into the Scala API modules and are only used if required.

For example, the Kryo serializer, which we always extended to support certain Scala types, now only includes them if an application uses the Scala APIs.
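One common way to include optional support only when it is needed is to probe the classloader for a marker class. The sketch below illustrates that general idea and is not Flink's actual mechanism: `OptionalTypeSupportSketch`, the extension labels, and the `markerClass` parameter are all hypothetical names chosen for this example.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch: add optional extensions only if a marker class is loadable.
class OptionalTypeSupportSketch {

    // Returns true if the named class is visible to the given loader.
    public static boolean isClassPresent(String className, ClassLoader loader) {
        try {
            // 'false' skips static initialization; we only test for visibility.
            Class.forName(className, false, loader);
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            return false;
        }
    }

    // Builds the list of serializer extensions to register.
    // markerClass is an assumption, e.g. a class shipped only with the Scala API.
    public static List<String> serializerExtensions(ClassLoader userLoader, String markerClass) {
        List<String> extensions = new ArrayList<>();
        extensions.add("java-defaults");
        if (isClassPresent(markerClass, userLoader)) {
            extensions.add("scala-types");
        }
        return extensions;
    }
}
```

An application that never pulls in the Scala API would not have the marker class on its classpath, so the Scala-specific extension is simply never registered.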

Finally, it was time to tackle the Table API, specifically the table planner, which contains 378,655 lines of Scala code at the time of writing. The table planner provides parsing, planning, and optimization of SQL and Table API queries into highly optimized Java code. It is the most extensive Scala codebase in Flink and it cannot be ported easily to Java. Using what we learned from building dedicated classloaders for the RPC stack and conditional classloading for the serializers, we hid the planner behind an abstraction that does not expose any of its internals, including Scala.
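The pattern of hiding a component behind an abstraction can be sketched as follows: callers compile only against an interface, and the implementation is resolved by name through a dedicated classloader. This is a simplified illustration, not the planner's real loading code. `HiddenComponentSketch`, `Planner`, and `InternalPlanner` are hypothetical names, and a real setup would place the implementation in a separate jar that only the component loader can see.

```java
// Hypothetical sketch: expose only an interface, load the implementation by name.
class HiddenComponentSketch {

    // The only type that user code is meant to see.
    public interface Planner {
        String plan(String query);
    }

    // Stand-in for an implementation that would normally live in its own jar.
    public static class InternalPlanner implements Planner {
        public InternalPlanner() {}

        @Override
        public String plan(String query) {
            return "plan(" + query + ")";
        }
    }

    // Resolves the implementation through the given loader and returns it
    // typed as the interface, so no implementation class leaks to callers.
    public static Planner load(ClassLoader componentLoader, String implClassName)
            throws ReflectiveOperationException {
        Class<?> impl = Class.forName(implClassName, true, componentLoader);
        return (Planner) impl.getDeclaredConstructor().newInstance();
    }
}
```

Because callers hold only a `Planner` reference, whatever libraries the implementation depends on stay confined to the component loader.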

The Future of Scala in Apache Flink

While most of these changes happened behind the scenes, they resulted in one very user-facing change: removing many Scala suffixes. You can find a list of all dependencies that lost their Scala suffix at the end of this post [1][2].

Additionally, changes to the Table API required several changes to the packaging and the distribution, which some power users relying on the planner internals might need to adapt to [3].

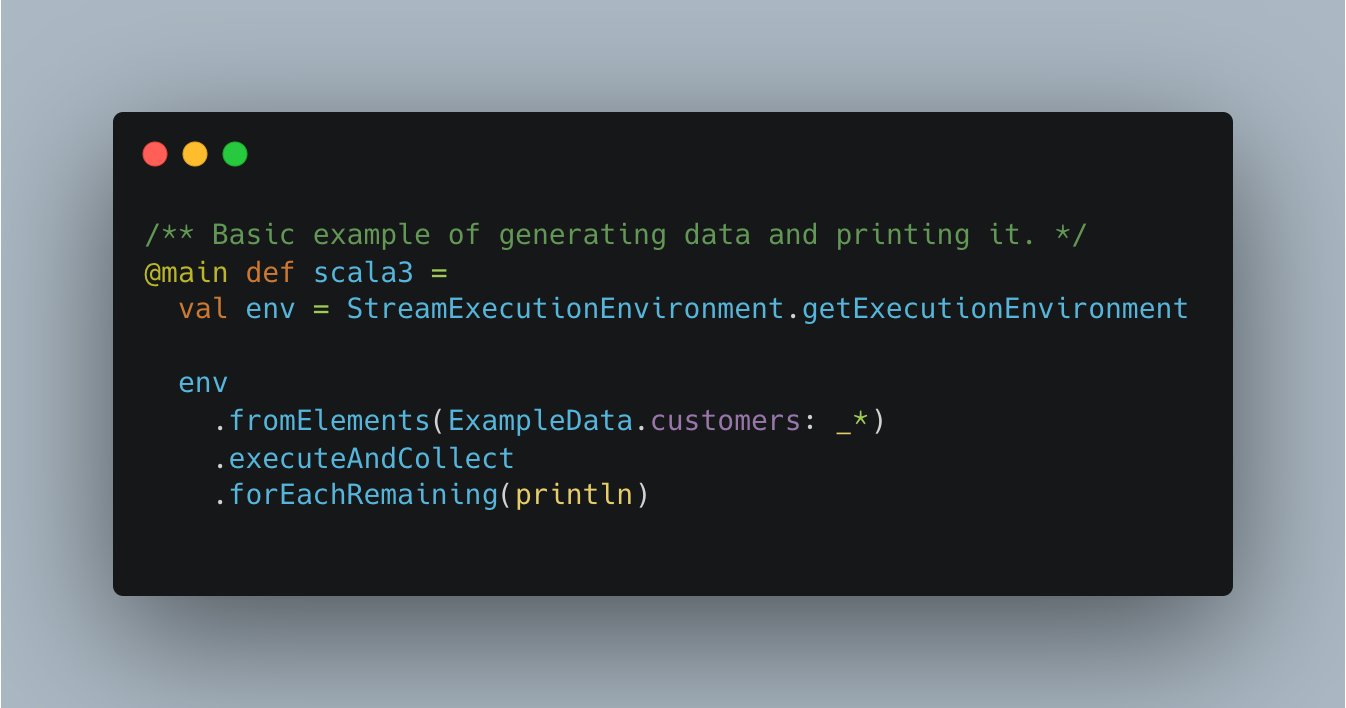

Going forward, Flink will continue to support Scala packages for the DataStream and Table APIs compiled against Scala 2.12, while the Java API is now unlocked for users to leverage components from any Scala version. We are already seeing new Scala 3 wrappers pop up in the community and are excited to see how users leverage these tools in their streaming pipelines [4][5][6]!

1. flink-cep, flink-clients, flink-connector-elasticsearch-base, flink-connector-elasticsearch6, flink-connector-elasticsearch7, flink-connector-gcp-pubsub, flink-connector-hbase-1.4, flink-connector-hbase-2.2, flink-connector-hbase-base, flink-connector-jdbc, flink-connector-kafka, flink-connector-kinesis, flink-connector-nifi, flink-connector-pulsar, flink-connector-rabbitmq, flink-connector-testing, flink-connector-twitter, flink-connector-wikiedits, flink-container, flink-dstl-dfs, flink-gelly, flink-hadoop-bulk, flink-kubernetes, flink-runtime-web, flink-sql-connector-elasticsearch6, flink-sql-connector-elasticsearch7, flink-sql-connector-hbase-1.4, flink-sql-connector-hbase-2.2, flink-sql-connector-kafka, flink-sql-connector-kinesis, flink-sql-connector-rabbitmq, flink-state-processor-api, flink-statebackend-rocksdb, flink-streaming-java, flink-table-api-java-bridge, flink-test-utils, flink-yarn, flink-table-runtime

2. https://nightlies.apache.org/flink/flink-docs-master/docs/dev/configuration/overview/#which-dependencies-do-you-need

3. https://nightlies.apache.org/flink/flink-docs-master/docs/dev/configuration/advanced/#anatomy-of-table-dependencies