Flink Community Update - May'20

May 6, 2020 - Marta Paes (@morsapaes)

Can you smell it? It’s release month! It took a while, but now that we’re all caught up with the past, the Community Update is here to stay. This time around, we’re warming up for Flink 1.11 and looking back at the month of April in the Flink community, with the release of Stateful Functions 2.0, a new self-paced Flink training, and some efforts to improve the Flink documentation experience.

Last month also marked the debut of the Flink Forward Virtual Conference 2020: what did you think? If you missed it altogether or just want to recap some of the sessions, the videos and slides are now available!

The Past Month in Flink

Flink Stateful Functions 2.0 is out!

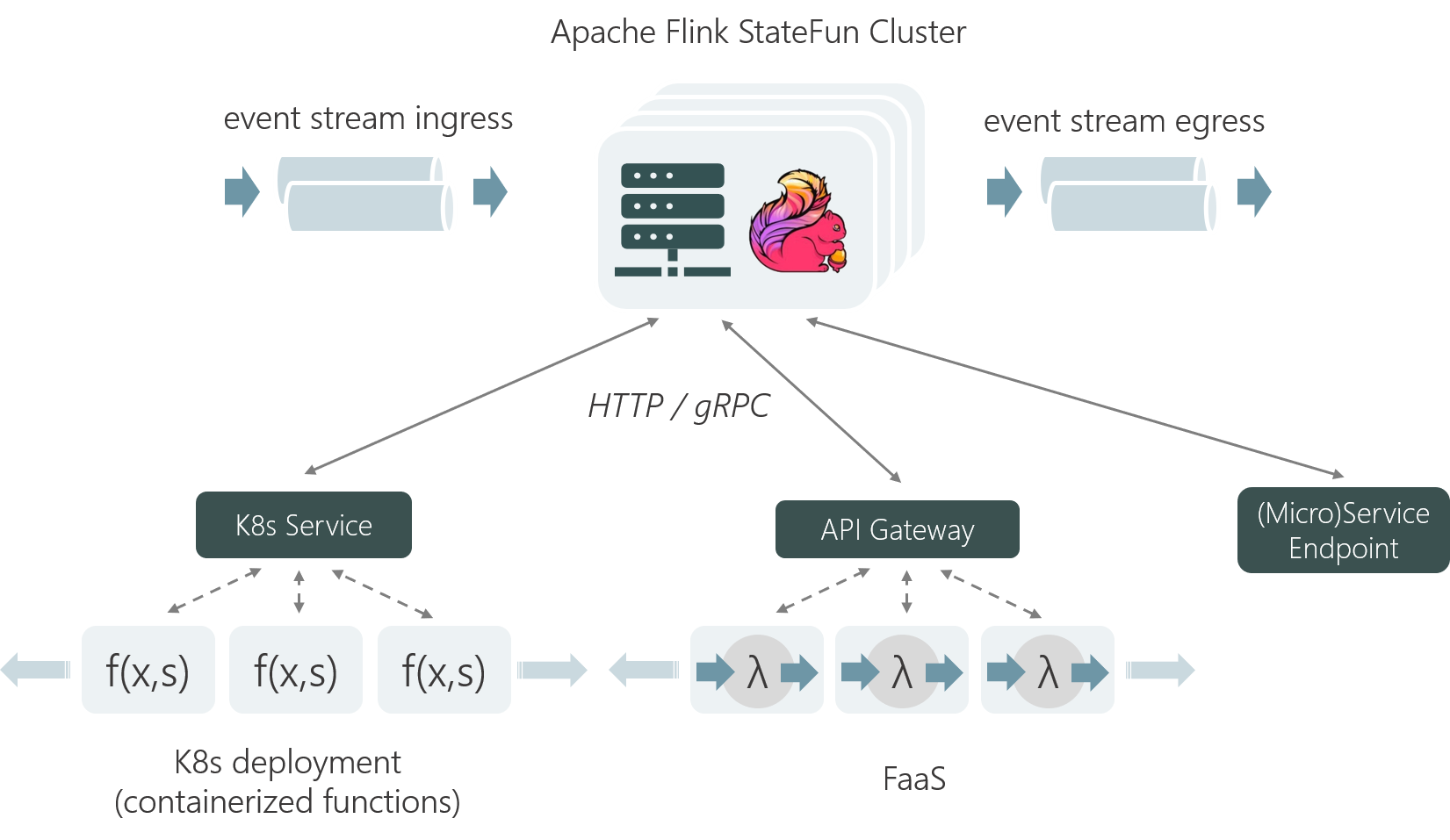

At the beginning of April, the Flink community announced the release of Stateful Functions 2.0, the first release as part of the Apache Flink project. With this release, you can use Flink as the base of a (stateful) serverless platform with out-of-the-box consistent and scalable state and efficient messaging between functions. You can even run your stateful functions on platforms like AWS Lambda, as Gordon (@tzulitai) demonstrated in his Flink Forward talk.

It’s been encouraging to see so many questions about Stateful Functions popping up on the mailing list and Stack Overflow! If you’d like to get involved, we’re always looking for new contributors, especially around SDKs for other languages like Go, JavaScript and Rust.

Warming up for Flink 1.11

The final preparations for the release of Flink 1.11 are well underway, with the feature freeze scheduled for May 15th, and there are a lot of new features and improvements to look out for:

- On the usability side, you can expect a big focus on smoothing data ingestion, with contributions like support for Change Data Capture (CDC) in the Table API/SQL (FLIP-105), easy streaming data ingestion into Apache Hive (FLIP-115) and support for Pandas DataFrames in PyFlink (FLIP-120). A great deal of effort has also gone into maturing PyFlink, with the introduction of user-defined metrics in Python UDFs (FLIP-112) and the extension of Python UDF support beyond the Python Table API (FLIP-106, FLIP-114).

- On the operational side, the much-anticipated new Source API (FLIP-27) will unify batch and streaming sources and improve out-of-the-box event-time behavior, while unaligned checkpoints (FLIP-76) and changes to network memory management will help speed up checkpointing under backpressure. This is part of a bigger effort to rethink fault tolerance that will introduce many other non-trivial changes to Flink. You can learn more about it in this recent Flink Forward talk!

Throw into the mix improvements around the type system, the WebUI, metrics reporting, supported formats and... we can’t wait! To get an overview of the ongoing developments, have a look at this thread. We encourage the community to get involved in testing once an RC (Release Candidate) is out. Keep an eye on the @dev mailing list for updates!

Flink Minor Releases

Flink 1.9.3

The community released Flink 1.9.3, fixing some outstanding bugs from Flink 1.9! You can find more in the announcement blog post.

Flink 1.10.1

Also in the pipeline is Flink 1.10.1, which is already in the RC voting phase, so you can expect it to be released soon!

New Committers and PMC Members

The Apache Flink community has welcomed three new PMC Members and two new Committers since the last update. Congratulations!

New PMC Members

New Committers

The Bigger Picture

A new self-paced Apache Flink training

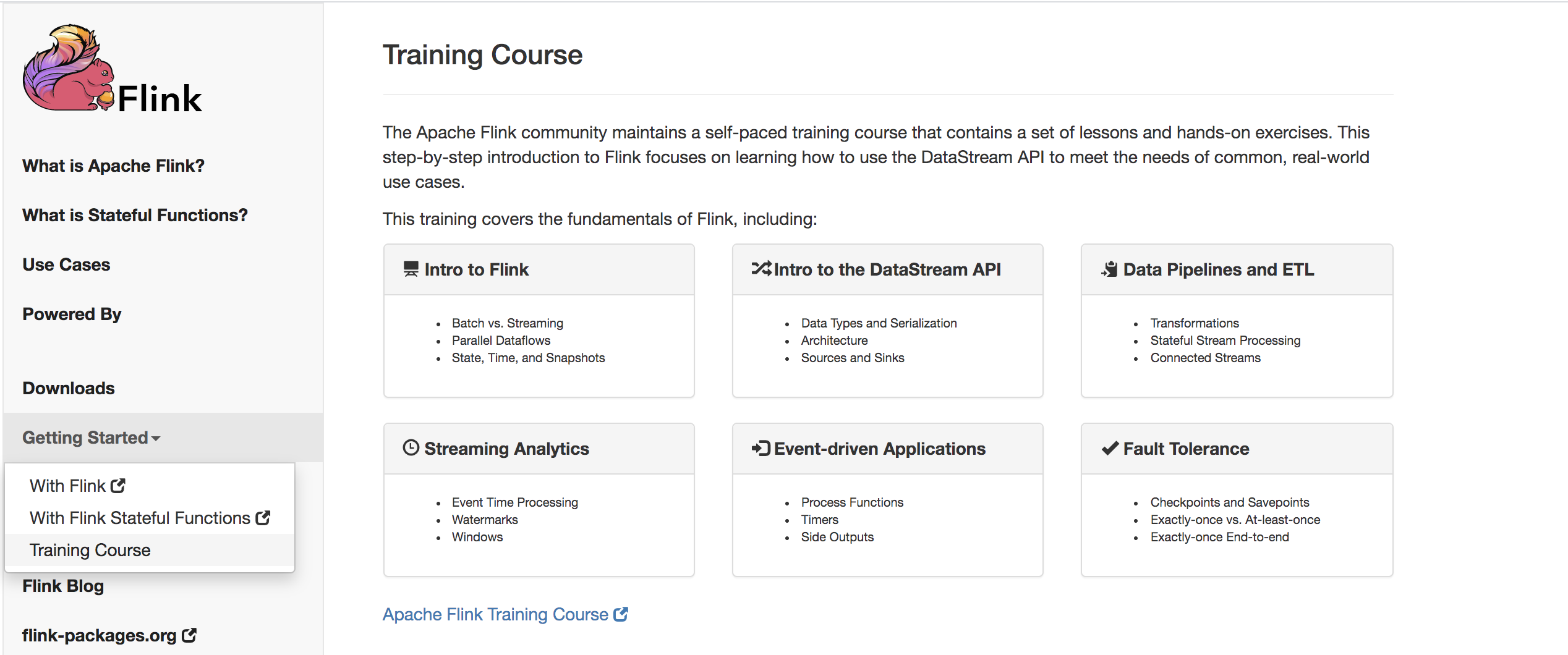

This week, the Flink website received the invaluable contribution of a self-paced training course curated by David (@alpinegizmo), made up of what used to be the entire training materials under training.ververica.com. The new materials guide you through the very basics of Flink and the DataStream API, and round off every concepts section with hands-on exercises to help you better assimilate what you learned.

Whether you’re new to Flink or just looking to strengthen your foundations, this training is the most comprehensive way to get started and is now completely open source: https://flink.apache.org/training.html. For now, the materials are only available in English, but the community intends to provide a Chinese translation in the future.

Google Season of Docs 2020

Google Season of Docs (GSoD) is a great initiative organized by Google Open Source to pair technical writers with mentors to work on documentation for open source projects. Last year, the Flink community submitted an application that unfortunately didn’t make the cut, but we are trying again! This time, with a project idea to improve the Table API & SQL documentation:

1) Restructure the Table API & SQL Documentation

Reworking the current documentation structure would help to:

- Lower the entry barrier to Flink for non-programmatic (i.e. SQL) users.
- Make the available features more easily discoverable.
- Improve the flow and logical correlation of topics.

FLIP-60 contains a detailed proposal on how to reorganize the existing documentation, which can be used as a starting point.

2) Extend the Table API & SQL Documentation

Some areas of the documentation lack sufficient detail or are not accessible to new Flink users. Examples of topics and sections that require attention are: planners, built-in functions, connectors, and the overview and concepts sections. There is a lot of work to be done, and the technical writer could choose which areas to focus on; these improvements could then be added to the documentation rework umbrella issue (FLINK-12639).

If you’re interested in learning more about this project idea or want to get involved in GSoD as a technical writer, check out the announcement blog post.

…and something to read!

Events across the globe have pretty much come to a halt, so we’ll leave you with some interesting resources to read and explore instead. In addition to this written content, you can also recap the sessions from the Flink Forward Virtual Conference!

| Type | Links |
|---|---|
| Blogposts | |
| Tutorials | |
| Flink Packages | Flink Packages is a website where you can explore (and contribute to) the Flink |

If you’d like to keep a closer eye on what’s happening in the community, subscribe to the Flink @community mailing list to get fine-grained weekly updates, upcoming event announcements and more.